Your app works perfectly in the Android emulator. You ship it. Within hours, 1-star reviews roll in: “App crashes on my Samsung Galaxy S24” and “Battery drains in 10 minutes.”

The emulator missed bugs that real users found immediately.

This is the emulator vs real device testing problem. Emulators are fast and cheap. Real devices catch bugs that emulators miss. The question isn’t which to use—it’s when to use each.

What Emulators Miss

Emulators consistently miss device-specific bugs that real devices catch. These include:

- Hardware interaction bugs: Camera, GPS, fingerprint sensor, NFC

- Performance issues: Battery drain, memory pressure, thermal throttling

- Network edge cases: Carrier-specific behavior, real-world latency

- Touch responsiveness: Gesture accuracy, multi-touch, pressure sensitivity

- Manufacturer customizations: Samsung One UI, Xiaomi MIUI, Huawei EMUI

If your QA process only uses emulators, you’re shipping with significant blind spots. This also affects Appium test speed and reliability.

Emulators vs Simulators vs Real Devices

First, let’s clarify the terminology (BrowserStack guide, Android developer docs, Apple Simulator docs):

| Type | What It Is | Examples | Hardware Replication |
|---|---|---|---|
| Emulator | Software that replicates hardware + OS | Android Studio Emulator | Yes (CPU, memory) |
| Simulator | Software that replicates OS behavior only | iOS Simulator | No |
| Real Device | Physical smartphone or tablet | iPhone 15, Pixel 8 | N/A (it’s real) |

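The Android emulator runs a complete Android system image in a virtual machine, with virtualized CPU, memory, and storage. It can emulate ARM instructions on an x86/x64 computer, although x86 system images with hardware acceleration are typically used for speed. The iOS simulator only simulates iOS APIs: it runs apps compiled for the Mac’s processor, not actual iOS device binaries.

This distinction matters: Android emulators generally sit closer to real device behavior than iOS simulators, though neither reproduces real hardware.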

When Emulators Work Well

Emulators excel at fast feedback during development:

Good Use Cases for Emulators

- Unit test execution: Logic tests that don’t touch device hardware

- UI layout testing: Verifying designs across screen sizes

- Smoke testing: Quick sanity checks during development

- Debugging: Easier to attach debuggers and inspect state

- CI pipeline checks: Fast automated runs for every commit

- Screenshot generation: Capturing app states for documentation

Emulator Advantages

| Factor | Emulator | Real Device |
|---|---|---|
| Startup time | 5-15 seconds | N/A (always ready) |
| Cost | Free | $200-1500 per device |
| Availability | Unlimited instances | Limited by inventory |
| Configuration | Any OS version, screen size | Fixed to physical device |
| CI integration | Easy | Requires device management |
| Debugging | Excellent | Good |

Practical Emulator Strategy

Use emulators for your high-volume, fast-feedback tests:

    # CI Pipeline Example
    test:
      stage: test
      script:
        # Run on emulators for speed
        - ./gradlew testDebugUnitTest          # Unit tests
        - ./emulator -avd Pixel_6_API_34 -no-window &
        - adb wait-for-device                  # Wait for the emulator to come online
        - ./gradlew connectedDebugAndroidTest  # Smoke tests

Target: 70-80% of test execution on emulators.

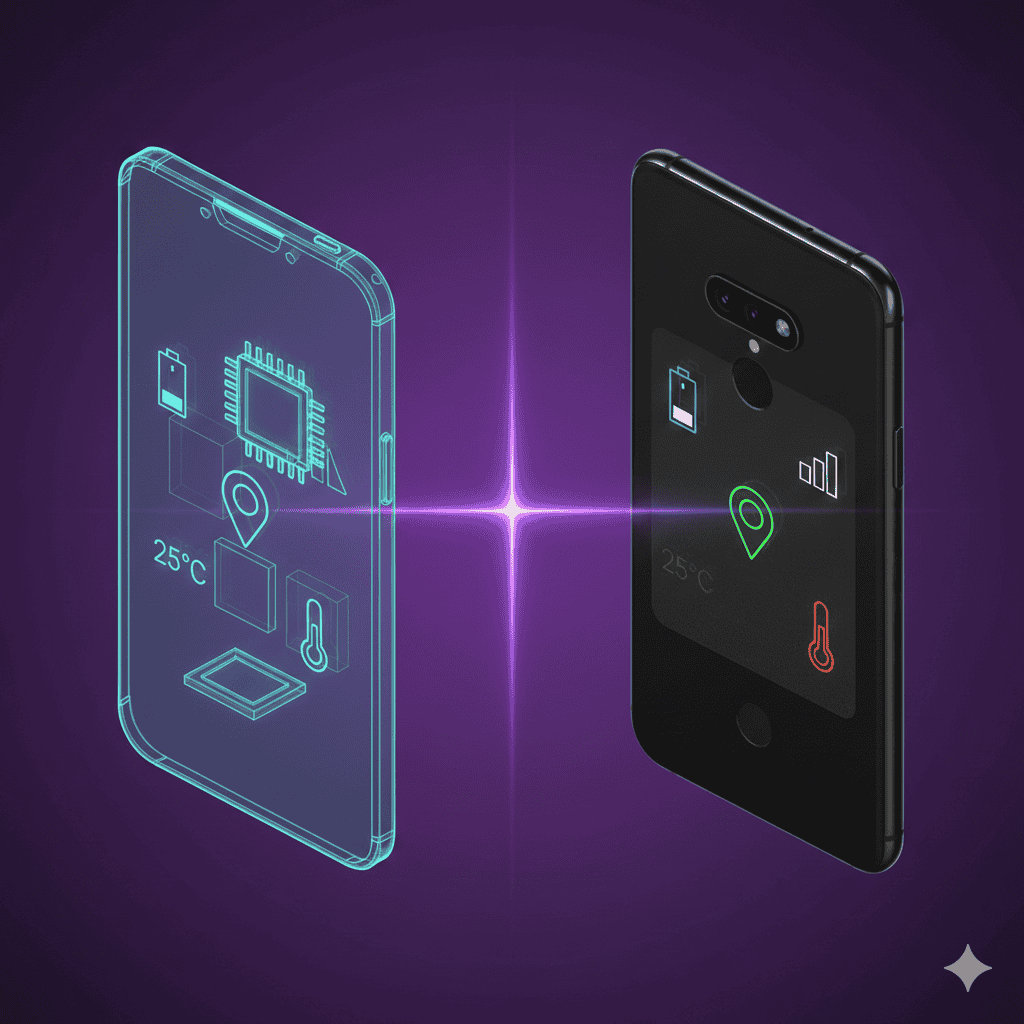

When Emulators Fail

Here’s what emulators can’t replicate accurately:

1. Hardware Sensors

Emulators simulate sensor data, but the simulation rarely matches reality:

| Sensor | Emulator Behavior | Real Device Behavior |
|---|---|---|
| GPS | Perfect coordinates instantly | Acquisition time, drift, accuracy variance |
| Camera | Static image or simple capture | Focus time, exposure adjustment, lighting response |
| Accelerometer | Smooth synthetic data | Noise, calibration variance |
| Fingerprint | Mock success/failure | Timing, partial matches, moisture issues |
| Bluetooth | Limited or no support | Pairing issues, range limitations |

Bug example: An app that uses GPS for delivery tracking worked perfectly in the emulator but failed in dense urban areas where GPS signal bounced between buildings.
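
One way to exercise this failure mode before field testing is to feed the tracking logic noisy fixes instead of the perfect coordinates an emulator supplies. The sketch below is a minimal illustration, not the app's actual code: `GpsFix`, `RouteDistance`, and the accuracy cutoff are hypothetical names introduced here. On Android, the accuracy value would typically come from `Location.getAccuracy()`.

```java
import java.util.List;

/** Hypothetical GPS sample: position plus the reported accuracy radius in meters. */
final class GpsFix {
    final double latDeg;
    final double lonDeg;
    final double accuracyMeters;

    GpsFix(double latDeg, double lonDeg, double accuracyMeters) {
        this.latDeg = latDeg;
        this.lonDeg = lonDeg;
        this.accuracyMeters = accuracyMeters;
    }
}

final class RouteDistance {
    private static final double EARTH_RADIUS_M = 6_371_000.0;

    /** Great-circle distance between two fixes using the haversine formula. */
    static double haversineMeters(GpsFix a, GpsFix b) {
        double dLat = Math.toRadians(b.latDeg - a.latDeg);
        double dLon = Math.toRadians(b.lonDeg - a.lonDeg);
        double h = Math.pow(Math.sin(dLat / 2), 2)
                + Math.cos(Math.toRadians(a.latDeg)) * Math.cos(Math.toRadians(b.latDeg))
                * Math.pow(Math.sin(dLon / 2), 2);
        return 2 * EARTH_RADIUS_M * Math.asin(Math.sqrt(h));
    }

    /** Sums distance along the route, skipping fixes whose accuracy radius exceeds the cutoff. */
    static double trackedMeters(List<GpsFix> fixes, double maxAccuracyMeters) {
        double total = 0.0;
        GpsFix previous = null;
        for (GpsFix fix : fixes) {
            if (fix.accuracyMeters > maxAccuracyMeters) {
                continue; // likely a reflected or low-quality fix; ignore it
            }
            if (previous != null) {
                total += haversineMeters(previous, fix);
            }
            previous = fix;
        }
        return total;
    }
}
```

Running the same route through `trackedMeters` with and without a few deliberately bad fixes shows how much a single reflected signal can inflate the tracked distance, which is the kind of error an emulator's clean coordinates never trigger.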

2. Performance Under Real Conditions

Emulators run on powerful development machines with abundant resources:

| Resource | Emulator | Real Device |
|---|---|---|
| CPU | Your Mac/PC CPU | Mobile chip (throttles under heat) |
| Memory | Allocated from 16-32GB RAM | Fixed 4-8GB with OS overhead |
| Battery | N/A (unlimited) | Depletes, affects performance |
| Thermal | N/A | Throttles when hot |
| Storage I/O | SSD speeds | Slower flash storage |

Bug example: An app that processed video ran smoothly in the emulator but caused thermal throttling and frame drops on mid-range phones in summer heat.
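
Frame drops like these can be quantified once frame render times have been collected on a device (for example from `adb shell dumpsys gfxinfo`). The following is a rough sketch under that assumption; `FrameStats` and its method are hypothetical names, and the 60 Hz budget is only one possible threshold.

```java
final class FrameStats {
    /** Frame budget at 60 Hz, in milliseconds (about 16.67 ms). */
    static final double BUDGET_60HZ_MS = 1000.0 / 60.0;

    /** Returns the fraction (0.0 to 1.0) of frames whose render time exceeded the budget. */
    static double droppedFrameRatio(double[] frameTimesMs, double budgetMs) {
        if (frameTimesMs.length == 0) {
            return 0.0;
        }
        int slow = 0;
        for (double frameTime : frameTimesMs) {
            if (frameTime > budgetMs) {
                slow++;
            }
        }
        return (double) slow / frameTimesMs.length;
    }
}
```

Comparing this ratio for the same scenario on a cool device and on one that has been under load for several minutes gives a simple signal for thermal throttling.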

3. Network Reality

Emulators use your development machine’s network:

Emulator network:

- WiFi: Fast, stable

- No carrier simulation

- No network handoffs

- No real-world latency

Real device network:

- Variable 4G/5G signal

- Carrier-specific behavior

- Network handoffs (WiFi → cellular)

- Packet loss, latency spikes

Bug example: An app with aggressive API polling worked fine on development WiFi but drained battery rapidly on cellular and caused timeouts on slow 3G connections.
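
A common mitigation for aggressive polling, not something the example app is known to have used, is exponential backoff: each consecutive failure doubles the wait before the next request, up to a cap. The sketch below assumes hypothetical names (`PollBackoff`, `nextDelayMs`) and leaves out jitter for brevity.

```java
final class PollBackoff {
    /**
     * Returns the delay before the next poll: baseMs doubled once per
     * consecutive failure, never exceeding maxMs.
     */
    static long nextDelayMs(long baseMs, int consecutiveFailures, long maxMs) {
        if (baseMs <= 0 || maxMs < baseMs) {
            throw new IllegalArgumentException("need 0 < baseMs <= maxMs");
        }
        long delay = baseMs;
        for (int i = 0; i < consecutiveFailures && delay < maxMs; i++) {
            // Doubling only when the result stays within maxMs avoids overflow.
            delay = (delay > maxMs / 2) ? maxMs : delay * 2;
        }
        return delay;
    }
}
```

With a 1-second base and a 60-second cap, three consecutive timeouts give an 8-second delay, so a slow cellular link is polled far less often than stable development WiFi.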

4. Manufacturer Customizations

Android manufacturers modify the OS in ways emulators can’t replicate:

- Samsung One UI: Different notification handling, power management

- Xiaomi MIUI: Aggressive background app killing

- Huawei EMUI: Custom permissions system

- OnePlus OxygenOS: Different RAM management

Bug example: An app’s background sync worked on the stock Android emulator but was killed by Xiaomi’s battery optimization. In a region where Xiaomi devices made up roughly 40% of the user base, those users stopped receiving notifications.

5. Touch and Gesture Accuracy

Mouse clicks on emulators don’t match finger taps:

| Interaction | Emulator | Real Device |
|---|---|---|
| Tap accuracy | Pixel-perfect mouse | Fat finger variance |
| Multi-touch | Limited support | Full gesture range |
| Pressure sensitivity | None | Force Touch, 3D Touch |
| Swipe velocity | Synthetic | Natural variance |
| Edge gestures | Often buggy | System-level handling |

Bug example: A drawing app worked perfectly with mouse input in emulators but had jitter and accuracy issues with actual finger input on real screens.

Real Device Testing: Where It’s Essential

Pre-Release Validation

Before any app store submission, test on real devices:

    Minimum real device coverage:
    ├── iOS
    │   ├── Latest iPhone (iPhone 15 Pro)
    │   ├── Previous generation (iPhone 14)
    │   ├── Budget model (iPhone SE)
    │   └── iPad (different screen ratio)
    └── Android
        ├── Latest Pixel (Pixel 8)
        ├── Latest Samsung (Galaxy S24)
        ├── Mid-range (Samsung Galaxy A54)
        └── Budget (Redmi Note 13)

Performance Testing

Real device performance metrics can’t be simulated:

    // Performance tests that MUST run on real devices.
    // launchApp(), waitForHomeScreen(), getBatteryPercentage(), and
    // runAppFor30Minutes() are helpers your test harness is assumed to provide.
    @Test
    void testAppStartupTime() {
        long start = System.currentTimeMillis();
        launchApp();
        waitForHomeScreen();
        long startupTime = System.currentTimeMillis() - start;

        // This assertion is meaningless on emulators
        assertThat(startupTime).isLessThan(3000);
    }

    @Test
    void testBatteryImpact() {
        int batteryBefore = getBatteryPercentage();
        runAppFor30Minutes();
        int batteryAfter = getBatteryPercentage();

        // Battery test impossible on emulators
        assertThat(batteryBefore - batteryAfter).isLessThan(10);
    }
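
A single startup measurement is noisy even on a real device, so a startup assertion is usually more stable when it is based on the median of several launches. The helper below is a minimal sketch of that idea; `StartupTiming` and its methods are hypothetical names, and the `Runnable` stands in for whatever launches the app and waits for the home screen.

```java
import java.util.Arrays;

final class StartupTiming {
    /** Median of the samples; for an even count, the mean of the two middle values. */
    static long medianMs(long[] samplesMs) {
        if (samplesMs.length == 0) {
            throw new IllegalArgumentException("no samples");
        }
        long[] sorted = samplesMs.clone();
        Arrays.sort(sorted);
        int mid = sorted.length / 2;
        return sorted.length % 2 == 1
                ? sorted[mid]
                : (sorted[mid - 1] + sorted[mid]) / 2;
    }

    /** Runs the action the given number of times and returns the median duration in milliseconds. */
    static long medianDurationMs(Runnable action, int runs) {
        long[] samples = new long[runs];
        for (int i = 0; i < runs; i++) {
            long start = System.nanoTime(); // monotonic clock, unaffected by wall-clock changes
            action.run();
            samples[i] = (System.nanoTime() - start) / 1_000_000;
        }
        return medianMs(samples);
    }
}
```

A startup test could then assert on `medianDurationMs(..., 5)` rather than on one run, which keeps an occasional slow launch from failing the build.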

Hardware Feature Testing

Any app that uses device hardware needs real device testing:

- Camera apps: Focus, exposure, image quality

- Fitness apps: Accelerometer, GPS tracking

- Payment apps: NFC, biometric authentication

- AR apps: Camera + sensors combined

- Location apps: GPS accuracy and battery impact

Accessibility Testing

Real device accessibility features behave differently:

- Screen readers: VoiceOver (iOS) and TalkBack (Android) timing and feedback

- Display settings: Font scaling, color inversion, reduced motion

- Switch control: Physical accessibility devices

- Voice control: Speech recognition accuracy

The Hybrid Strategy

The optimal approach combines emulators and real devices:

Testing Pyramid for Mobile

                   /\
                  /  \                  Real Device:
                 /    \                 • Pre-release validation
                /      \                • Performance testing
               /--------\               • Hardware features
              /          \
             /   Cloud    \             Cloud Real Devices:
            /   Devices    \            • Cross-device coverage
           /                \           • Regression testing
          /------------------\
         /                    \
        /      Emulators       \        Emulators:
       /                        \       • Unit tests
      /                          \      • UI tests
     /                            \     • CI/CD smoke tests
    /______________________________\

Recommended Split

| Test Type | Emulator | Cloud Real Devices | Local Real Devices |
|---|---|---|---|
| Unit tests | 100% | 0% | 0% |
| UI component tests | 100% | 0% | 0% |
| Integration tests | 70% | 30% | 0% |
| E2E smoke tests | 50% | 30% | 20% |
| E2E regression | 20% | 50% | 30% |
| Performance tests | 0% | 20% | 80% |
| Pre-release validation | 0% | 30% | 70% |
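
To turn these percentages into concrete numbers for a given suite, the split can be applied to a test count. This is a small arithmetic sketch; `TierSplit` is a hypothetical helper, and sending the rounding remainder to the emulator tier is an arbitrary choice made here.

```java
final class TierSplit {
    /**
     * Splits totalTests across emulator, cloud, and local tiers by percentage.
     * Percentages must sum to 100; any rounding remainder goes to the emulator tier.
     * Returns {emulator, cloud, local}.
     */
    static int[] allocate(int totalTests, int emulatorPct, int cloudPct, int localPct) {
        if (emulatorPct + cloudPct + localPct != 100) {
            throw new IllegalArgumentException("percentages must sum to 100");
        }
        int cloud = totalTests * cloudPct / 100;
        int local = totalTests * localPct / 100;
        int emulator = totalTests - cloud - local;
        return new int[] {emulator, cloud, local};
    }
}
```

For a 200-test E2E regression suite, `allocate(200, 20, 50, 30)` yields 40 emulator runs, 100 cloud runs, and 60 local runs.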

Implementation

Daily CI (on every commit):

# Run on emulators for speed

- Lint checks

- Unit tests

- UI tests (Espresso/XCUITest)

- Smoke tests (critical paths)

Nightly CI (once per day):

# Run on real devices for coverage

- Full regression suite

- Cross-device compatibility

- Performance benchmarks

Pre-release (before app store submission):

# Run on local real devices for accuracy

- Full manual exploratory testing

- Hardware feature verification

- Performance profiling

- Accessibility audit

Cloud vs Local Real Devices

Both options have trade-offs:

Cloud Real Device Platforms

Providers: BrowserStack, LambdaTest (TestMu AI), Sauce Labs, Headspin (see our BrowserStack vs LambdaTest comparison)

Advantages:

- Wide device selection (1000+ models)

- No device procurement or maintenance

- Global device locations

- Easy CI/CD integration

Disadvantages:

- Network latency (100-500ms per command)

- Shared devices (previous user’s state)

- Can’t test localhost without tunnels

- Expensive at scale ($200-300/parallel session/month) — see BrowserStack pricing breakdown

Local Real Devices

Advantages:

- Sub-50ms latency

- Full control over device state

- Direct localhost access

- One-time device cost

Disadvantages:

- Device procurement and maintenance

- Limited model selection

- Physical space requirements

- Device management complexity

Cost Comparison (10 parallel devices, annual)

| Approach | Year 1 | Year 2 | Year 3 | 3-Year Total |
|---|---|---|---|---|
| BrowserStack | $24,000 | $24,000 | $24,000 | $72,000 |
| LambdaTest | $19,000 | $19,000 | $19,000 | $57,000 |
| Own Devices + DeviceLab | $15,000 | $12,000 | $12,000 | $39,000 |

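By these figures, owning devices costs less than either cloud option from the first year, and the gap grows to $18,000-33,000 over three years. The savings only materialize if you run tests frequently enough to keep the devices busy.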
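
The totals in the table can be rechecked, or recomputed with your own quotes, using a few lines of arithmetic. The sketch below uses hypothetical names (`DeviceCost`, `firstYearCheaper`) and compares cumulative yearly costs only; it ignores staff time and device resale value.

```java
final class DeviceCost {
    /** Sums yearly costs into a multi-year total. */
    static int total(int[] yearlyCosts) {
        int sum = 0;
        for (int cost : yearlyCosts) {
            sum += cost;
        }
        return sum;
    }

    /**
     * Returns the first year (1-based) in which option A's cumulative cost
     * is lower than option B's, or -1 if that never happens in the given range.
     */
    static int firstYearCheaper(int[] optionA, int[] optionB) {
        int years = Math.min(optionA.length, optionB.length);
        int cumulativeA = 0;
        int cumulativeB = 0;
        for (int year = 0; year < years; year++) {
            cumulativeA += optionA[year];
            cumulativeB += optionB[year];
            if (cumulativeA < cumulativeB) {
                return year + 1;
            }
        }
        return -1;
    }
}
```

With the table's own-device row, `total(new int[] {15000, 12000, 12000})` returns 39000, matching the 3-year total shown.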

Choosing Your Device Coverage

You can’t test on every device. Here’s how to prioritize:

Analytics-Driven Selection

Use your Firebase/Analytics data to identify:

- Top 10 device models by active users

- Top 5 OS versions

- Devices with highest crash rates

- Devices with lowest user ratings
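
Once the analytics data is exported, picking the top models is a simple ranking. The following is a minimal sketch that assumes the export has already been loaded into a map of device model to active users; `DevicePicker` is a hypothetical name, and ties are broken alphabetically so the result is deterministic.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

final class DevicePicker {
    /** Returns the n device models with the most active users, highest first. */
    static List<String> topByActiveUsers(Map<String, Integer> activeUsersByModel, int n) {
        List<Map.Entry<String, Integer>> entries =
                new ArrayList<>(activeUsersByModel.entrySet());
        // Sort by user count descending, then by model name for stable ordering.
        entries.sort((a, b) -> {
            int byUsers = Integer.compare(b.getValue(), a.getValue());
            return byUsers != 0 ? byUsers : a.getKey().compareTo(b.getKey());
        });
        List<String> top = new ArrayList<>();
        for (int i = 0; i < Math.min(n, entries.size()); i++) {
            top.add(entries.get(i).getKey());
        }
        return top;
    }
}
```

The same ranking can be repeated with crash rate or average rating as the value to build the other lists above.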

Minimum Viable Coverage

High-priority devices (must test):

├── iOS: Latest iPhone, iPhone-1 year, iPhone SE

├── Android: Latest Pixel, Latest Samsung flagship

└── Android: One mid-range device (Galaxy A series)

Medium-priority (test weekly):

├── iOS: iPads (if you support tablets)

├── Android: OnePlus, Xiaomi (regional markets)

└── Android: Low-memory devices (2-3GB RAM)

Nice-to-have (test before major releases):

├── Older iOS versions (iOS-2)

├── Foldables (Galaxy Fold)

└── Region-specific devices

Frequently Asked Questions

Can emulators replace real device testing?

No. Emulators are useful for early development and quick feedback, but they cannot replicate real-world conditions like battery drain, network variability, GPS accuracy, or hardware-specific behavior. Use emulators for 70-80% of tests, real devices for final validation.

What’s the difference between an emulator and simulator?

Emulators replicate both hardware and software (Android Studio Emulator). Simulators replicate only software behavior (iOS Simulator). Android emulators are generally closer to real devices than iOS simulators because they run a full Android system image on virtualized hardware rather than reimplementing OS APIs on the host.

Do cloud device platforms like BrowserStack use real devices?

Yes, but with caveats. Cloud platforms offer real devices, but network latency (100-500ms per command) can mask performance issues. Your tests run on shared devices that may not reflect typical user conditions.

What bugs can only be found on real devices?

Hardware-specific bugs (GPS, camera, sensors), performance issues (battery drain, memory pressure), network edge cases (carrier variations, slow 3G), and touch responsiveness problems can only be reliably found on real devices.

Summary: Decision Framework

| Scenario | Use Emulator | Use Real Device |
|---|---|---|
| Unit tests | Yes | |
| UI layout tests | Yes | |
| Fast CI feedback | Yes | |
| Device-specific bugs | | Yes |
| Performance testing | | Yes |
| Hardware features | | Yes |
| Pre-release validation | | Yes |
| Cross-device regression | | Yes (cloud OK) |
| Accessibility testing | | Yes |

The golden rule: Emulators for speed and convenience. Real devices for accuracy and confidence.

Start with emulators for 80% of tests. Use real devices for the 20% that matters most: performance, hardware features, and final validation before shipping. For test automation, address flaky tests to maximize the value of your device coverage.