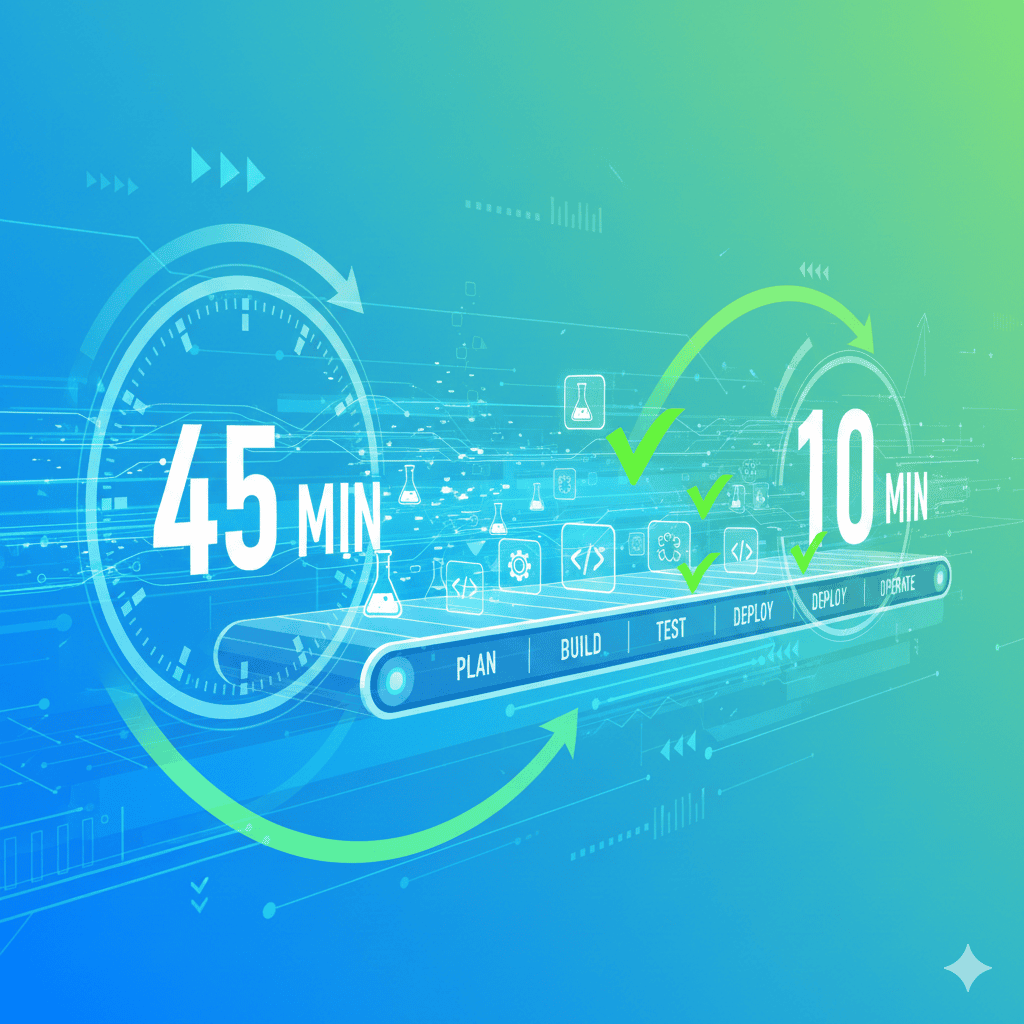

A 45-minute test pipeline isn’t just annoying—it’s expensive. Engineers context-switch, bugs ship, and releases slow to a crawl.

Google and Meta target sub-10-minute CI feedback loops. Most teams aren’t even close.

This guide identifies the bottlenecks killing your mobile test pipeline and provides practical fixes to hit that 10-minute target.

The Cost of Slow Pipelines

Before diving into solutions, let’s quantify the problem.

Developer time waste:

A 45-minute pipeline means engineers either wait (wasting $100+/hour in salary) or context-switch, which is reported to reduce productivity by 23%. At 10 builds per day across a 10-person team, that’s 7.5 hours of pipeline time per day, or 37.5 hours per five-day week.

Compounding delays:

When CI is slow, developers batch changes to avoid triggering it. Larger batches mean harder debugging. Harder debugging means longer fix times. Longer fix times mean more batching. The cycle feeds itself.

Release velocity:

Netflix found that performance metrics guide development priorities, leading to a 43% reduction in stream start times over two years. Fast pipelines enable fast iteration.

The benchmark:

| Metric | Target | Needs Work |
|---|---|---|
| CI feedback | <10 minutes | >10 minutes |
| CD deployment | <20 minutes | >20 minutes |
| Flaky test rate | <5% | >10% |

For more on the hidden costs of test unreliability, see The Real Cost of Flaky Mobile Tests.

Where Time Goes: The Bottlenecks

1. Sequential Test Execution

The biggest time sink. If 100 tests run one after another at 30 seconds each, that’s 50 minutes. Run them in parallel across 10 devices and it’s 5 minutes.

Signs you have this problem:

- Your pipeline shows tests running one-by-one

- Adding more tests linearly increases pipeline time

- Device utilization is low (one device active, others idle)

2. Large Monolithic Test Suites

Some teams run their entire E2E suite on every commit. That might be 500 tests taking 2 hours.

The testing pyramid principle:

            /\          <- Few E2E tests (slow, expensive)
           /  \
          /----\        <- Some integration tests
         /      \
        /--------\      <- Many unit tests (fast, cheap)

If your pyramid is inverted (more E2E than unit tests), your pipeline will always be slow.

3. Device Queue Wait Times

Cloud device farms have limited inventory. During peak hours, your tests might queue for 5-10 minutes waiting for devices.

Signs of queue problems:

- Pipeline duration varies by time of day

- “Waiting for device” messages in logs

- Faster results on weekends

This is one reason teams consider private device labs over shared cloud infrastructure.

4. Slow Test Setup/Teardown

Every test might:

- Install the app (30+ seconds)

- Log in (5-10 seconds)

- Navigate to the test area (10-20 seconds)

- Clean up after (10+ seconds)

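Multiply by 100 tests and setup/teardown dominates execution time: at the low end that’s 55 seconds of overhead per test, or roughly 90 minutes across 100 tests run serially.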

5. Flaky Tests

A test that fails randomly 10% of the time will:

- Require investigation (wasted time)

- Need reruns (doubled execution)

- Train developers to ignore failures (dangerous)

Microsoft calculated flaky tests cost them $1.14 million per year in engineering time. For solutions, see Appium Flaky Tests Complete Fix.

6. Inefficient Locators

XPath queries that traverse the entire view hierarchy are slow:

    # Slow: traverses entire tree
    driver.find_element('xpath', '//android.widget.LinearLayout/android.widget.FrameLayout/android.view.View/android.widget.Button[@text="Login"]')

    # Fast: direct ID lookup
    driver.find_element('id', 'loginButton')

This seems minor but compounds across thousands of operations. For more optimization tips, see Appium Slow: 7 Fixes That Work.

Fix 1: Parallel Testing

The highest-impact optimization. Transform serial execution into parallel execution.

How it works:

Instead of:

    Device 1: Test 1 → Test 2 → Test 3 → ... → Test 100
    Total time: 100 × 30s = 50 minutes

Do this:

    Device 1:  Test 1  → Test 11 → Test 21 → ... → Test 91
    Device 2:  Test 2  → Test 12 → Test 22 → ... → Test 92
    Device 3:  Test 3  → Test 13 → Test 23 → ... → Test 93
    ...
    Device 10: Test 10 → Test 20 → Test 30 → ... → Test 100
    Total time: 10 × 30s = 5 minutes
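The round-robin assignment in the diagram can be sketched in a few lines of Python. This is an illustration rather than a real scheduler: the function names `assign_round_robin` and `wall_clock_seconds` are made up for this example, and it assumes every test takes the same 30 seconds and that all devices are available immediately.

```python
from math import ceil


def assign_round_robin(test_ids, device_count):
    """Deal tests out to devices like cards: item i goes to device i % device_count."""
    queues = [[] for _ in range(device_count)]
    for index, test_id in enumerate(test_ids):
        queues[index % device_count].append(test_id)
    return queues


def wall_clock_seconds(test_count, device_count, seconds_per_test):
    """Wall-clock time when every test takes the same time and devices run concurrently."""
    return ceil(test_count / device_count) * seconds_per_test


tests = list(range(1, 101))  # Test 1 .. Test 100
queues = assign_round_robin(tests, device_count=10)

print(queues[0][:3])                         # [1, 11, 21] -> first tests on Device 1
print(wall_clock_seconds(100, 1, 30) / 60)   # 50.0 minutes on one device
print(wall_clock_seconds(100, 10, 30) / 60)  # 5.0 minutes on ten devices
```

Real suites rarely have uniform test durations, so the 5-minute figure is a best case.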

Implementation approaches:

- Cloud device farms: BrowserStack, Sauce Labs, LambdaTest support parallel execution out of the box

- CI-level parallelism: GitHub Actions, CircleCI, GitLab allow matrix builds

- Framework-level: JUnit 5, pytest-xdist support parallel test execution

GitHub Actions example:

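    jobs:
      test:
        runs-on: ubuntu-latest
        strategy:
          matrix:
            device: [pixel-6, samsung-s21, pixel-8, samsung-s23]
        steps:
          - uses: actions/checkout@v4
          # -Pdevice is a project property; your build or test runner must read it to pick the device
          - name: Run tests on ${{ matrix.device }}
            run: ./gradlew connectedAndroidTest -Pdevice=${{ matrix.device }}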

Fix 2: Test Sharding

Even better than simple parallelism: split tests by expected duration so each shard finishes at roughly the same time.

The problem with naive splitting:

    Shard 1: 10 fast tests (2 minutes total)
    Shard 2: 10 slow tests (20 minutes total)
    Total time: 20 minutes (limited by slowest shard)

Smart sharding:

Use historical execution data to balance shards:

    Shard 1: Mix of fast and slow (11 minutes)
    Shard 2: Mix of fast and slow (11 minutes)
    Total time: 11 minutes

Tools that support smart sharding:

- CircleCI’s test splitting

- Launchable’s predictive test selection

- Custom scripts using test timing data
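A custom script for duration-based sharding can be quite small. The sketch below uses a greedy "longest test first, into the lightest shard" heuristic, which is one common way to balance shards and not necessarily what any of the tools above do internally. The function name `balance_shards` and the timing data are hypothetical; the durations are chosen to match the 2-minute and 20-minute example above.

```python
import heapq


def balance_shards(durations, shard_count):
    """Greedy longest-first assignment.

    durations: dict mapping test name -> historical duration in seconds.
    Returns a list of (total_seconds, [test names]), one entry per shard.
    """
    # Min-heap of (current total, shard index), so the lightest shard is always on top.
    heap = [(0.0, i) for i in range(shard_count)]
    heapq.heapify(heap)
    shards = [[] for _ in range(shard_count)]

    # Place the slowest tests first; each one goes to the currently lightest shard.
    for name, seconds in sorted(durations.items(), key=lambda kv: kv[1], reverse=True):
        total, index = heapq.heappop(heap)
        shards[index].append(name)
        heapq.heappush(heap, (total + seconds, index))

    totals = {index: total for total, index in heap}
    return [(totals[i], shards[i]) for i in range(shard_count)]


# Made-up timing data: 10 fast tests (12 s each) and 10 slow tests (120 s each).
timings = {f"fast_{i}": 12 for i in range(10)}
timings.update({f"slow_{i}": 120 for i in range(10)})

for total, names in balance_shards(timings, shard_count=2):
    print(f"{total / 60:.0f} min, {len(names)} tests")  # 11 min, 10 tests (for both shards)
```

The heuristic does not guarantee an optimal split, but with real timing data it usually gets shards close enough that no single shard dominates the pipeline.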

Fix 3: Test Prioritization

Not all tests need to run on every commit. Prioritize by impact:

Tier 1: Every commit (must be fast)

- Unit tests

- Critical path smoke tests

- Tests for changed code

Tier 2: Every merge to main

- Integration tests

- API tests

- Feature-level E2E

Tier 3: Nightly/weekly

- Full regression suite

- Performance tests

- Edge case coverage
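One way to wire these tiers into a pytest suite is to tag tests with markers and build the `-m` selection expression from the CI trigger. The sketch below is an assumption-heavy illustration: the marker names, the trigger names, and the choice to make each tier include the faster tiers before it are all made up for this example.

```python
# Marker names per tier; example names only, not a pytest convention.
TIER_MARKERS = {
    "commit": ["unit", "smoke"],
    "merge": ["integration", "api", "e2e_feature"],
    "nightly": ["regression", "performance", "edge_case"],
}
TIER_ORDER = ["commit", "merge", "nightly"]


def marker_expression(trigger):
    """Build a pytest -m expression covering the trigger's tier and every faster tier."""
    if trigger not in TIER_ORDER:
        raise ValueError(f"unknown trigger: {trigger!r}")
    selected = []
    for tier in TIER_ORDER[: TIER_ORDER.index(trigger) + 1]:
        selected.extend(TIER_MARKERS[tier])
    return " or ".join(selected)


print(marker_expression("commit"))  # unit or smoke
print(marker_expression("merge"))   # unit or smoke or integration or api or e2e_feature
```

The resulting string would be passed to pytest as `pytest -m "<expression>"`, with each marker registered in the project's pytest configuration.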

Selective test execution:

Run only tests affected by code changes:

    # Run tests only for changed modules
    # HEAD~1 only exists if the checkout step fetched at least two commits (fetch-depth: 2)
    - name: Detect changes
      id: changes
      run: echo "modules=$(git diff --name-only HEAD~1 | grep -oP 'modules/\K[^/]+' | sort -u | paste -sd, -)" >> "$GITHUB_OUTPUT"
    - name: Run affected tests
      run: ./gradlew test -Pmodules=${{ steps.changes.outputs.modules }}
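If the selection logic outgrows a shell one-liner, the same mapping from changed files to modules can be written in Python. This sketch assumes the `modules/<name>/...` repository layout implied by the grep pattern above; the function name `affected_modules` and the example paths are hypothetical.

```python
import re

# Same idea as the grep pattern: capture the path segment right after "modules/".
MODULE_PATTERN = re.compile(r"modules/([^/]+)")


def affected_modules(changed_paths):
    """Return the sorted, de-duplicated module names touched by the changed file paths."""
    found = set()
    for path in changed_paths:
        match = MODULE_PATTERN.search(path)
        if match:
            found.add(match.group(1))
    return sorted(found)


# Example input, as `git diff --name-only` might print it (paths are made up).
changed = [
    "modules/checkout/src/main/Cart.kt",
    "modules/checkout/src/test/CartTest.kt",
    "modules/login/src/main/LoginViewModel.kt",
    "README.md",
]
print(",".join(affected_modules(changed)))  # checkout,login
```

Path-based selection misses cross-module dependencies, so a change in a shared module should still trigger the tests of everything that depends on it.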

Fix 4: Caching and Incremental Builds

Stop rebuilding what hasn’t changed.

Cache dependencies:

    # GitHub Actions
    - uses: actions/cache@v4
      with:
        path: |
          ~/.gradle/caches
          ~/.gradle/wrapper
        key: gradle-${{ hashFiles('**/*.gradle*', '**/gradle-wrapper.properties') }}

Incremental compilation:

Most build tools support this:

    # Gradle incremental build
    ./gradlew assembleDebug # Rebuilds only changed code

Cache test results:

If tests passed and neither test code nor app code changed, skip them:

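    # The cache entry is only saved when the job succeeds, so a hit means
    # these exact sources already produced a passing run
    - name: Check if tests need to run
      id: test-cache
      uses: actions/cache@v4
      with:
        path: test-results
        key: tests-${{ hashFiles('app/src/**', 'tests/**') }}
    - name: Run tests
      if: steps.test-cache.outputs.cache-hit != 'true'
      run: ./gradlew test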
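The cache key above works because it is a fingerprint of the inputs: same files, same key, so the earlier result can be reused. Outside GitHub Actions the same idea can be sketched with a content hash. Everything named here (`fingerprint`, `should_run_tests`, `record_green_run`, the marker file) is hypothetical, and the sketch only looks at files on disk, not at environment or dependency changes.

```python
import hashlib
from pathlib import Path


def fingerprint(roots):
    """Hash the relative path and contents of every file under the given directories."""
    digest = hashlib.sha256()
    for root in roots:
        root = Path(root)
        for path in sorted(p for p in root.rglob("*") if p.is_file()):
            digest.update(path.relative_to(root).as_posix().encode())
            digest.update(b"\0")
            digest.update(path.read_bytes())
            digest.update(b"\0")
    return digest.hexdigest()


def should_run_tests(roots, marker_file):
    """Return True unless marker_file already holds the current fingerprint."""
    marker = Path(marker_file)
    return not (marker.exists() and marker.read_text().strip() == fingerprint(roots))


def record_green_run(roots, marker_file):
    """Call only after a fully passing run; stores the fingerprint that was tested."""
    Path(marker_file).write_text(fingerprint(roots))
```

A CI script would call `should_run_tests(["app/src", "tests"], ".last-green")` before the test step and `record_green_run(...)` after a passing one, keeping the marker file outside the hashed directories.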

Fix 5: Reduce Test Setup Time

Every second saved on setup multiplies by test count.

Use test fixtures:

Instead of logging in for every test:

    import pytest

    # login(), test_something() and test_something_else() stand in for your app-specific helpers

    # Bad: Login in every test
    def test_feature_1():
        login()
        test_something()

    def test_feature_2():
        login()
        test_something_else()

    # Good: Login once per session
    @pytest.fixture(scope="session")
    def authenticated_user():
        return login()

    def test_feature_1(authenticated_user):
        test_something()

    def test_feature_2(authenticated_user):
        test_something_else()

Don’t reinstall the app between tests:

    # Appium capability to keep app installed
    caps['noReset'] = True
    caps['fullReset'] = False

Use app deep links:

Instead of navigating through UI to reach a screen, deep link directly:

    # Slow: Navigate through menu
    driver.find_element('id', 'menu').click()
    driver.find_element('id', 'settings').click()
    driver.find_element('id', 'account').click()

    # Fast: Deep link
    driver.execute_script('mobile: deepLink', {'url': 'myapp://settings/account'})

Fix 6: Eliminate Flaky Tests

Flaky tests require investigation and reruns—both waste time.

Quarantine flaky tests:

    # Run flaky tests separately, don't block pipeline
    - name: Run stable tests
      run: pytest tests/ -m "not flaky"
    - name: Run flaky tests (non-blocking)
      run: pytest tests/ -m "flaky" || true

Fix root causes:

Most flakiness comes from:

- Timing issues: Use explicit waits, not sleep()

- Test order dependencies: Ensure tests are independent

- Shared state: Reset app state between tests

- Network variability: Mock external services
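To illustrate the first point, an explicit wait polls for a condition and returns as soon as it holds, instead of always sleeping for the worst case. In real Appium or Selenium tests, `WebDriverWait` from `selenium.webdriver.support.ui` plays this role; the dependency-free helper below (`wait_until` is a made-up name) only shows the idea.

```python
import time


def wait_until(condition, timeout=10.0, interval=0.25):
    """Poll condition() until it returns a truthy value or timeout seconds elapse.

    Returns the truthy value; raises TimeoutError otherwise.
    """
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} s")
        time.sleep(interval)


# Stand-in for a UI element that becomes ready after a short delay.
ready_at = time.monotonic() + 0.3
print(wait_until(lambda: time.monotonic() >= ready_at, timeout=2.0, interval=0.05))  # True
```

A fixed `sleep(2)` here would waste about 1.7 seconds on every call and still fail whenever the element took longer than 2 seconds to appear.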

Track flaky test metrics:

    # conftest.py
    # Record test stability over time
    def pytest_runtest_makereport(item, call):
        if call.when == "call":
            # record_test_result is your own storage function (database, CSV, metrics service)
            record_test_result(
                test_name=item.nodeid,
                passed=call.excinfo is None,
                duration=call.stop - call.start,
            )
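Once results are recorded by a hook like the one above, flaky tests can be separated from genuinely broken ones by looking for tests that both passed and failed on the same commit. The record shape used here, `(test_name, commit_sha, passed)`, is an assumption for this sketch; the hook above would need to store the commit SHA as well.

```python
from collections import defaultdict


def find_flaky(records):
    """records: iterable of (test_name, commit_sha, passed) tuples.

    A test counts as flaky if it both passed and failed on the same commit,
    since the code under test did not change between those runs.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in records:
        outcomes[(test_name, commit_sha)].add(passed)
    return sorted({name for (name, _), seen in outcomes.items() if seen == {True, False}})


# Made-up history for illustration.
history = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),    # same commit, different outcome: flaky
    ("test_checkout", "abc123", False),
    ("test_checkout", "def456", True),  # fixed by a code change: not flaky
    ("test_search", "abc123", True),
]
flaky = find_flaky(history)
tests_seen = {name for name, _, _ in history}
print(flaky, f"{len(flaky) / len(tests_seen):.0%}")  # ['test_login'] 33%
```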

Fix 7: Use Emulators Strategically

Real devices are expensive and limited. Emulators are fast and scalable. For a detailed comparison, see Emulator vs Real Device Testing 2026.

When to use emulators:

- Unit tests (no device-specific behavior)

- UI tests for logic validation

- Development feedback loops

- Parallel scaling (spin up as many emulators as your runners can host)

When to use real devices:

- Final E2E validation

- Performance testing

- Device-specific bug reproduction

- Camera, GPS, sensors testing

Hybrid approach:

    jobs:
      fast-feedback:
        runs-on: ubuntu-latest
        steps:
          - name: Run tests on emulator
            run: |
              ./start-emulator.sh
              ./gradlew connectedAndroidTest
      real-device-validation:
        needs: fast-feedback
        runs-on: ubuntu-latest
        steps:
          - name: Run smoke tests on real devices
            # Placeholder command: substitute your device farm's actual CLI or API call
            run: |
              browserstack-cli run tests/smoke --devices "Pixel 8, Samsung S24"

Measuring Progress

Track these metrics to know if optimizations are working:

| Metric | How to Measure | Target |
|---|---|---|
| Pipeline duration | CI dashboard | <10 min |
| Flaky test rate | Runs that fail, then pass on rerun with no code change / Total runs | <5% |
| Device wait time | Time from device request to test start | <30 sec |
| Test execution time | Sum of all test durations | Decreasing trend |
| Parallel efficiency | Total test time / (Wall clock time × number of parallel workers) | >80% |
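Parallel efficiency is easiest to read as a percentage when it is normalized by the number of workers: total test time divided by the worker time that was available. The sketch below uses that definition; the function name and the numbers are illustrative, reusing the 100-test, 10-device example from earlier.

```python
def parallel_efficiency(test_durations, wall_clock_seconds, worker_count):
    """Fraction of available worker time spent running tests (1.0 means no idle time)."""
    busy = sum(test_durations)
    available = wall_clock_seconds * worker_count
    return busy / available


# 100 tests at 30 s each on 10 devices.
durations = [30] * 100
print(parallel_efficiency(durations, wall_clock_seconds=300, worker_count=10))  # 1.0
print(parallel_efficiency(durations, wall_clock_seconds=375, worker_count=10))  # 0.8
```

In the second call the same tests take 75 seconds longer on the wall clock, for example because of device queueing or unbalanced shards, which puts the run right at the 80% threshold.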

DORA metrics for testing:

- Lead time for changes: How long from commit to production?

- Deployment frequency: How often can you deploy?

- Change failure rate: What % of deployments fail?

- Mean time to recover: How quickly do you fix failures?

Slow pipelines hurt all four metrics.

Quick Wins Checklist

If you can only do a few things, start here:

- Enable parallel test execution (biggest impact)

- Cache dependencies and build artifacts

- Quarantine flaky tests immediately

- Use emulators for fast feedback, real devices for validation

- Replace sleep() with explicit waits everywhere

- Use ID locators instead of XPath

- Skip test setup when possible (noReset, deep links)

- Run only affected tests on each commit

The 10-Minute Target

Here’s what a sub-10-minute pipeline looks like:

    0:00 - Trigger build
    0:30 - Dependencies cached, build starts
    1:30 - Incremental build complete
    2:00 - 10 emulators spin up in parallel
    2:30 - Tests sharded and distributed
    8:00 - All tests complete
    8:30 - Results aggregated, artifacts uploaded
    9:00 - Pipeline complete ✅

Compare to a typical slow pipeline:

    0:00 - Trigger build
    2:00 - Dependencies downloaded
    5:00 - Full rebuild complete
    6:00 - Wait for device availability
    11:00 - First test starts
    45:00 - Last test completes
    47:00 - Pipeline complete ❌

The difference: parallel execution, caching, smart device allocation, and test sharding.

Bottom Line

Slow mobile test pipelines aren’t inevitable—they’re a symptom of sequential thinking in a parallel world.

The fix is straightforward:

- Parallelize everything possible

- Cache everything that doesn’t change

- Run only what’s needed

- Fix flaky tests instead of ignoring them

The tools exist. The patterns are proven. The only question is whether you’ll invest the time to implement them.

Your target: sub-10-minute CI feedback. Every minute over that is waste.