1,319 Maestro Issues: Data-Driven Dev

We scraped every open Maestro issue and built the entire project around what we found. Two architectural decisions cause most of the pain.

maestro-runner Benchmarks vs Maestro

Head-to-head on 8 real test flows: 2-3.6x faster execution, 13x less RAM, and zero JVM startup tax.

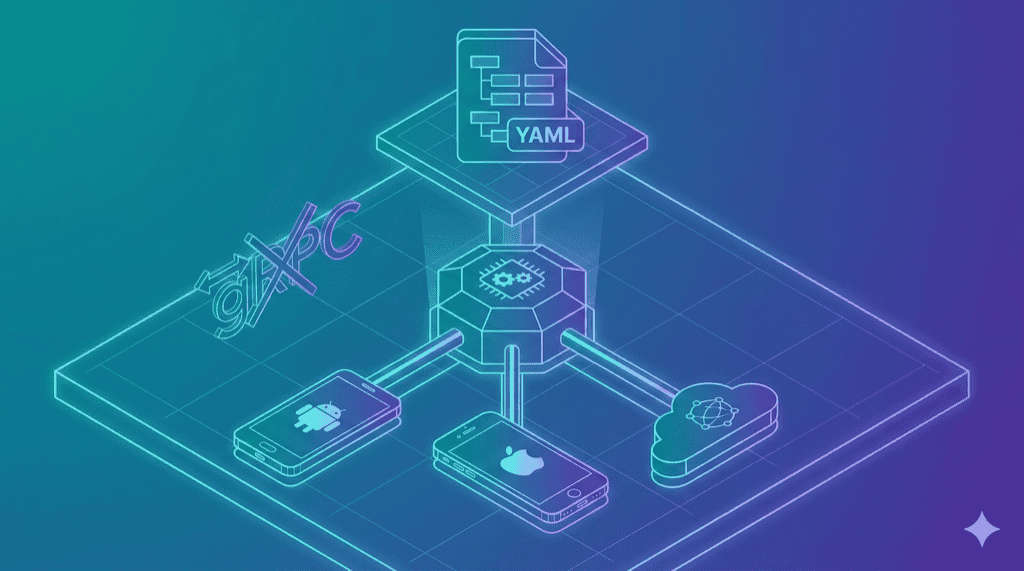

maestro-runner Architecture: Go, No gRPC

Why we chose Go, killed gRPC, and built a three-driver architecture. The technical decisions behind maestro-runner.

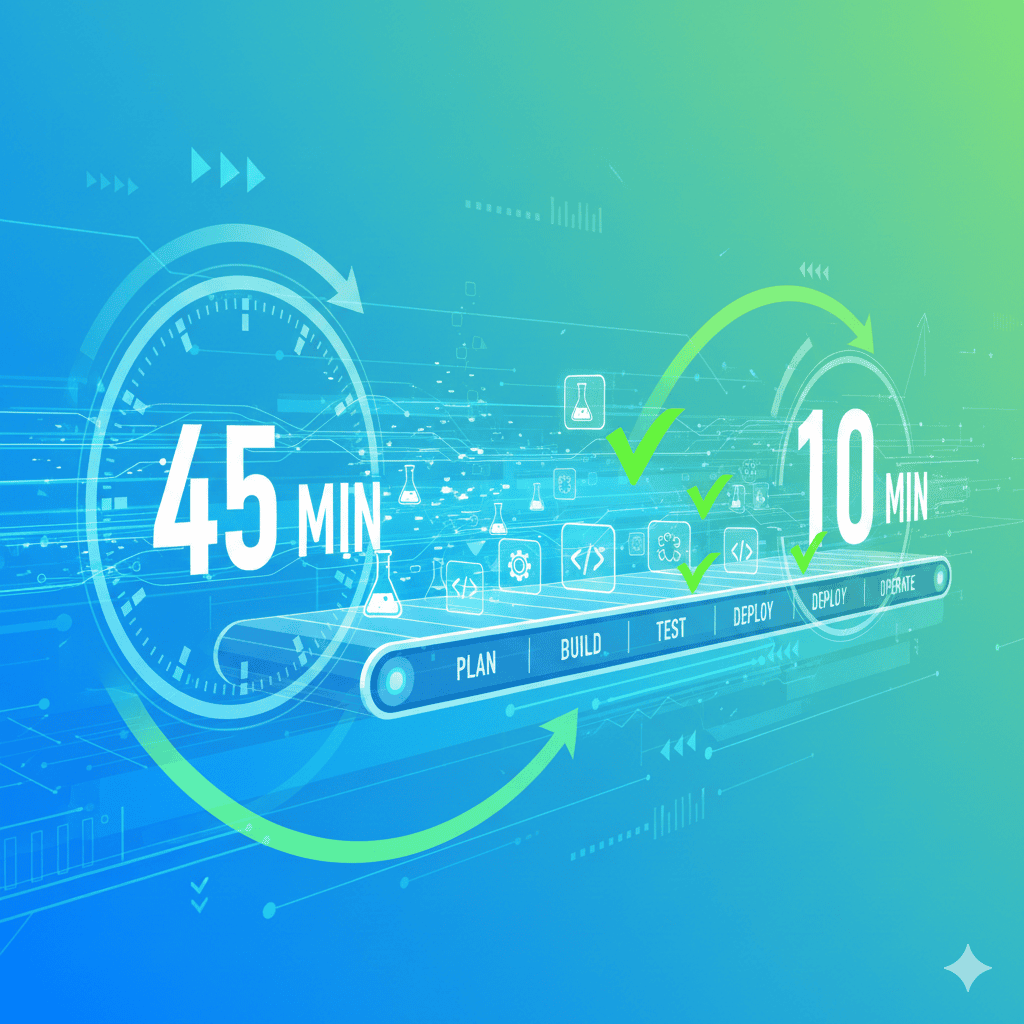

Mobile Testing in CI/CD: Optimize for Speed

45-minute pipelines cost you $100+/hour in developer time. Here's how to hit sub-10-minute feedback loops.

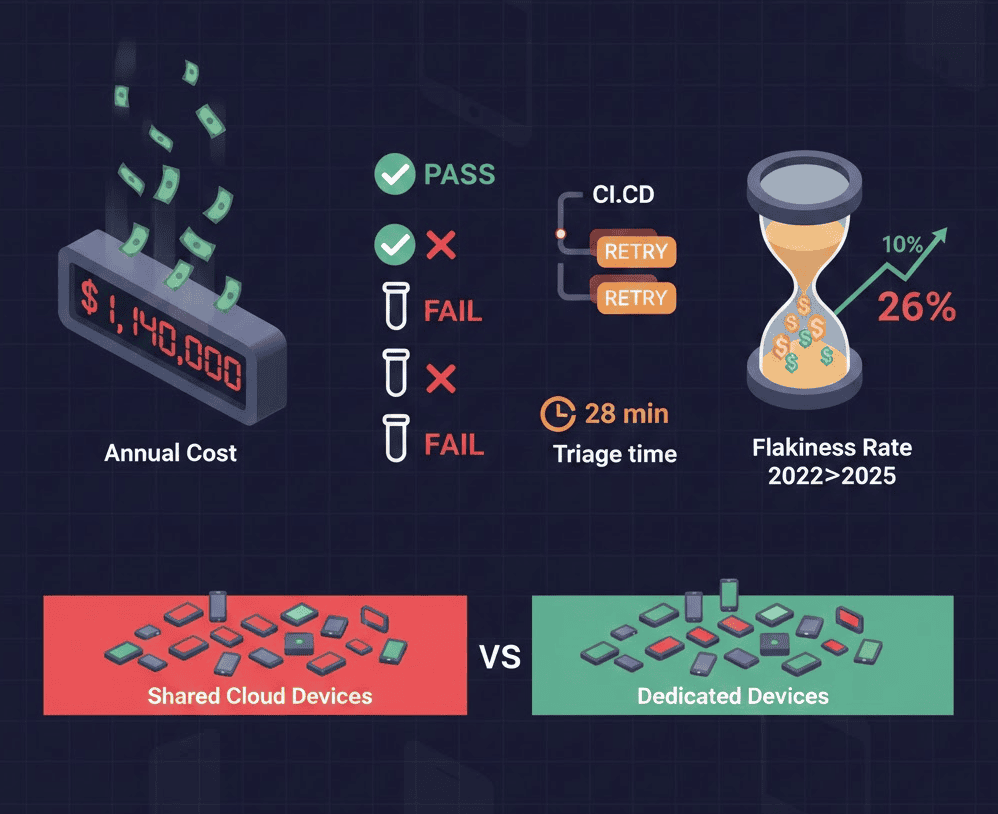

Flaky Mobile Tests Cost $57,600/Year—Here's the

Flaky mobile tests waste 2-4 hours per developer per week. See the full cost breakdown and how teams are fixing it.

Sauce Connect Running Slow? TCP Meltdown

Your Selenium tests run fine locally. But the moment you route them through Sauce Connect, everything slows to a crawl.

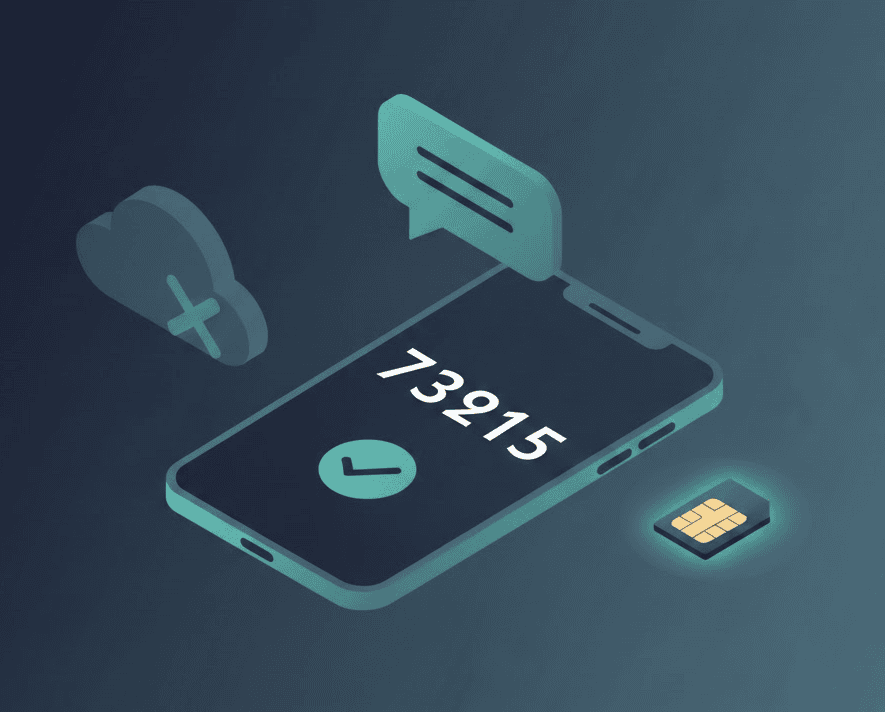

Testing OTP/SMS/2FA on Mobile Devices: Guide

Your staging environment uses 123456 as the OTP. Tests pass. CI is green. Then production users complain: 'I never received the code.'

BrowserStack Local Keeps Dropping? 5 Fixes (And

Your CI pipeline failed again. The error log says 'BrowserStack Local connection dropped.' You're not alone.

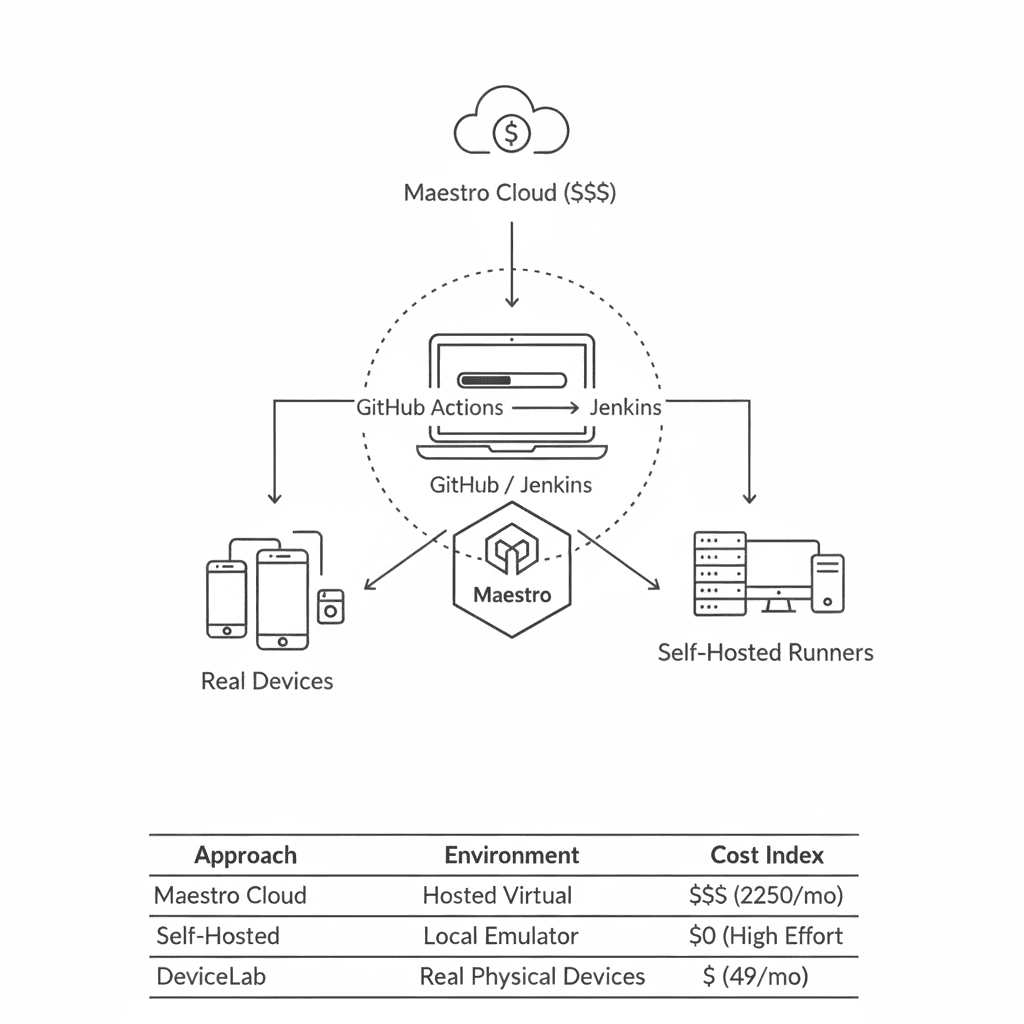

Maestro CI/CD Setup: GitHub Actions + Jenkins

Maestro Cloud costs $250/device/month. Here's how to run Maestro in CI using your own devices—for free.

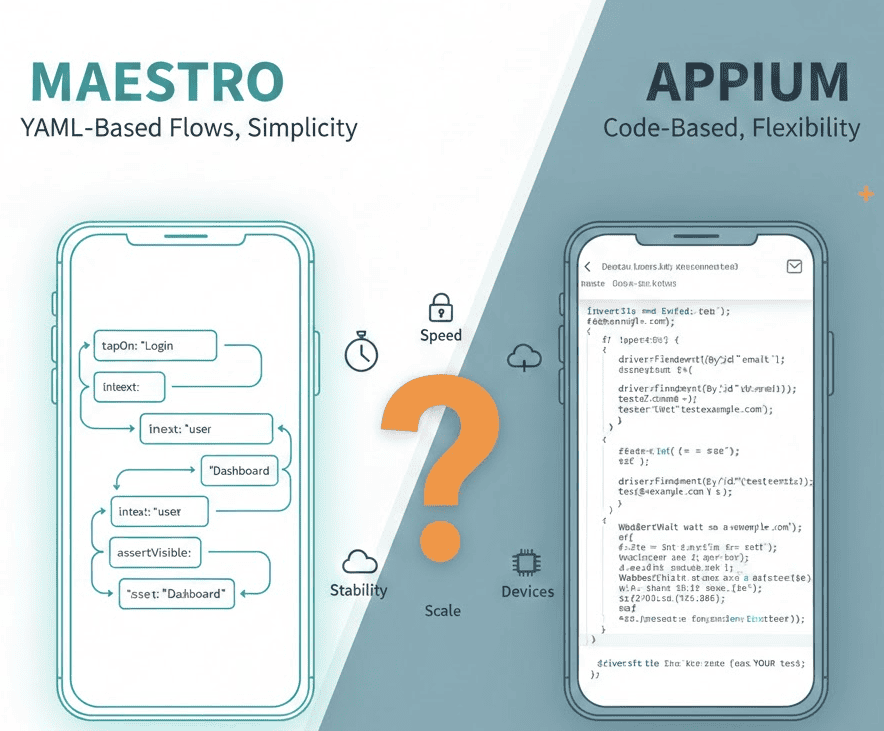

Maestro vs Appium: 10x Faster, But There's a

Maestro runs 10x faster than Appium with simpler syntax, but its iOS support is limited. Here's the complete picture.

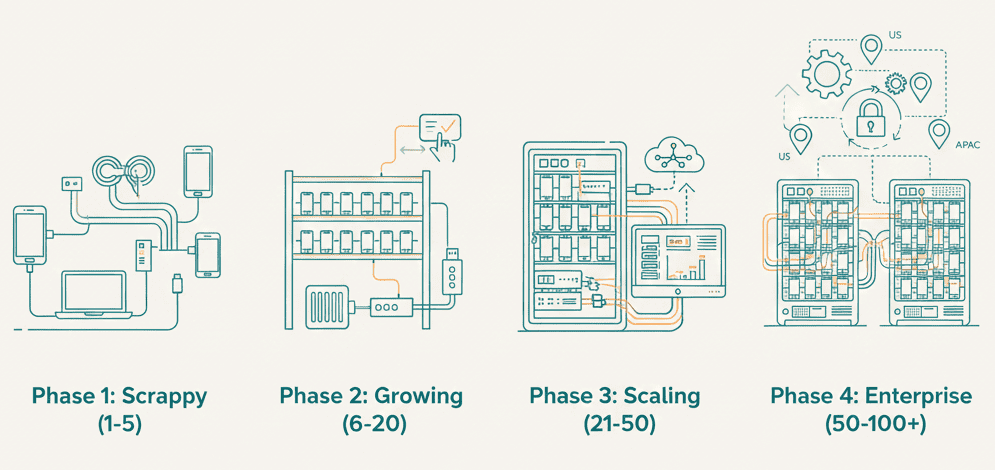

Scaling Mobile Test Infrastructure: From 10 to

The playbook for each growth phase—from your first device to a production-grade device lab.

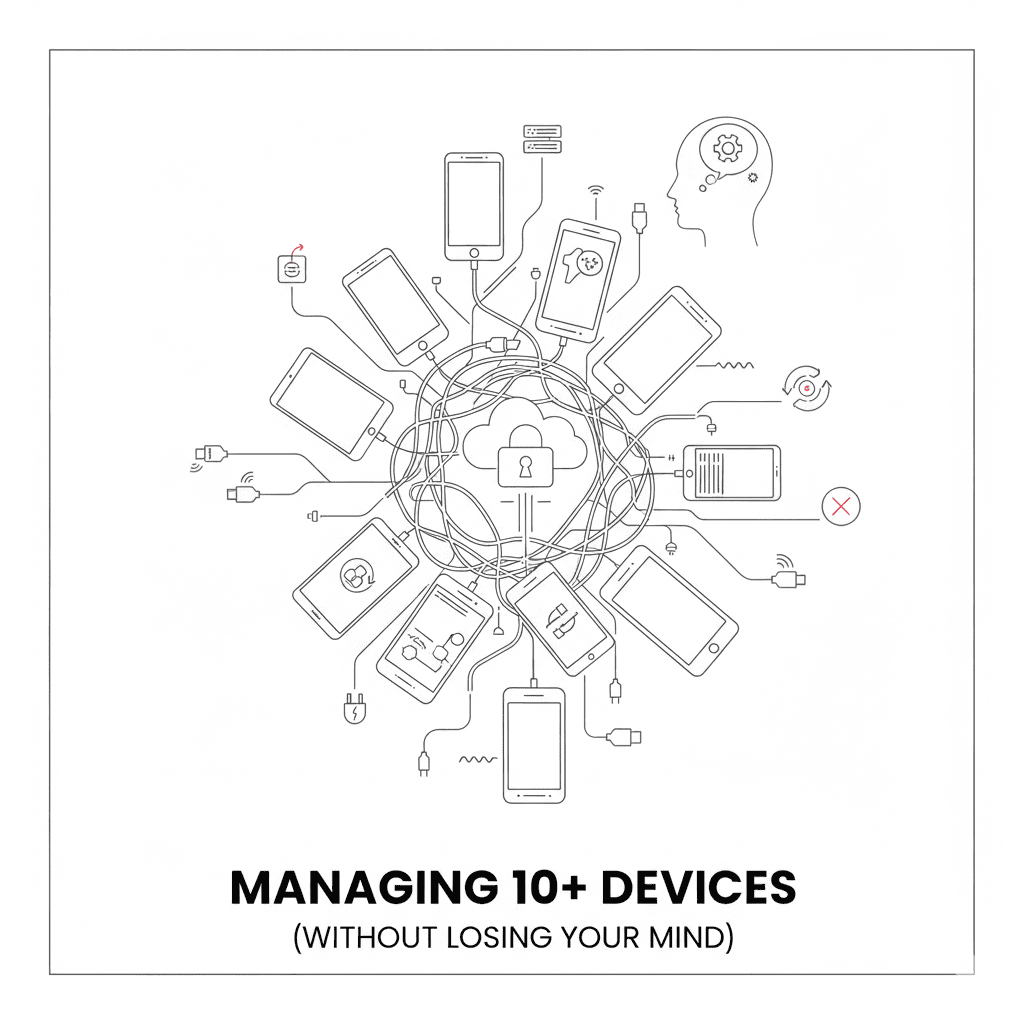

Managing 50+ Test Devices: Automation

USB disconnects, device state drift, parallel test conflicts—here's how to actually scale your device lab.

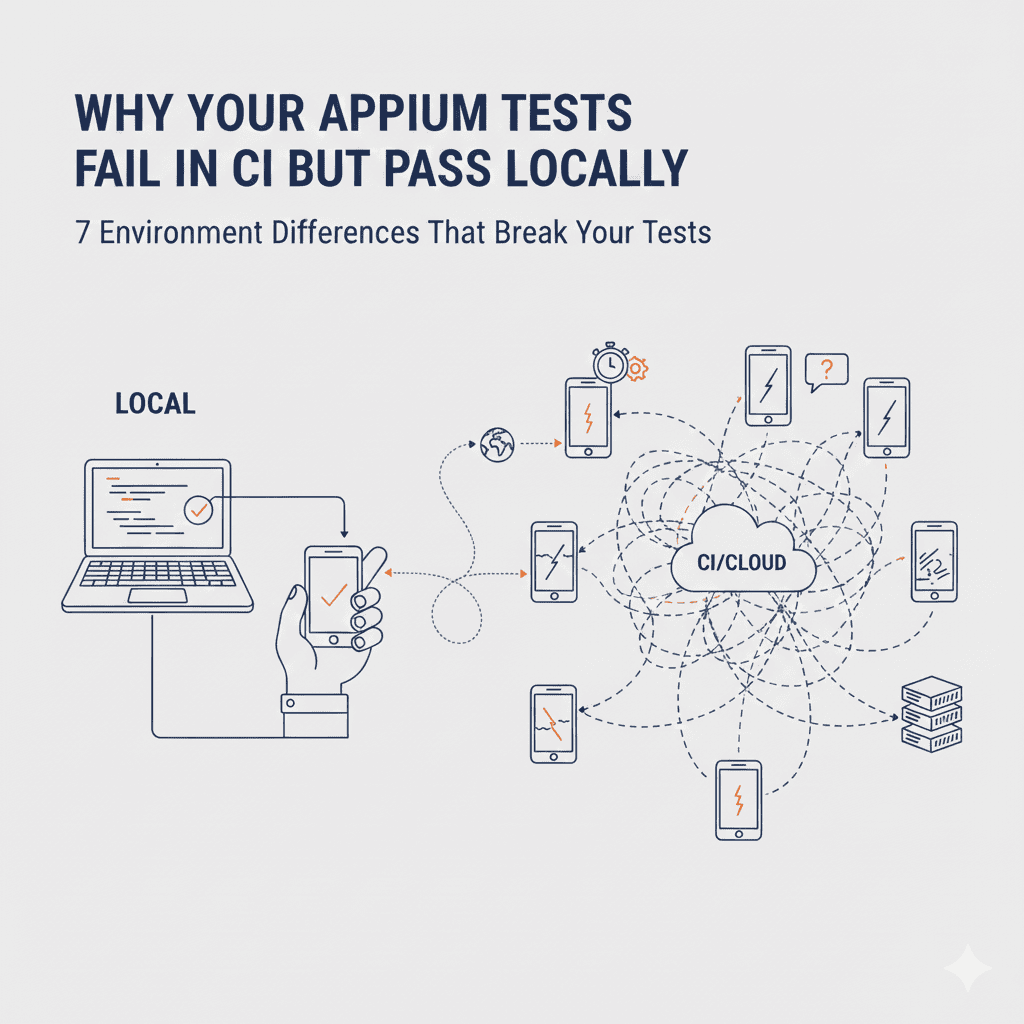

Appium Tests Fail in CI But Pass Locally?

7 environment differences that break your Appium tests in CI and how to fix each one with code examples.

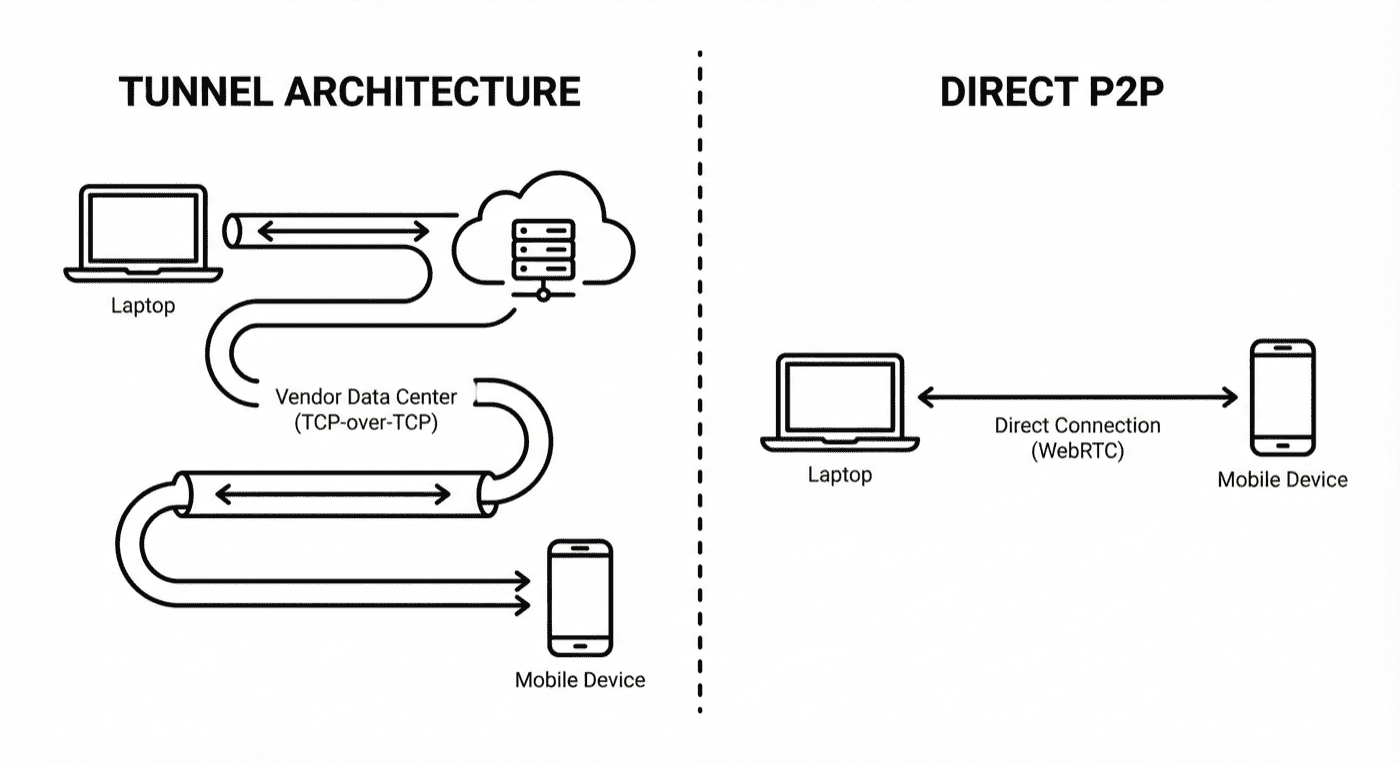

Test Localhost Apps Without Tunnels (No Sauce

Cloud device testing tunnels are slow and flaky. Here's the computer science behind TCP meltdown, and a better approach.

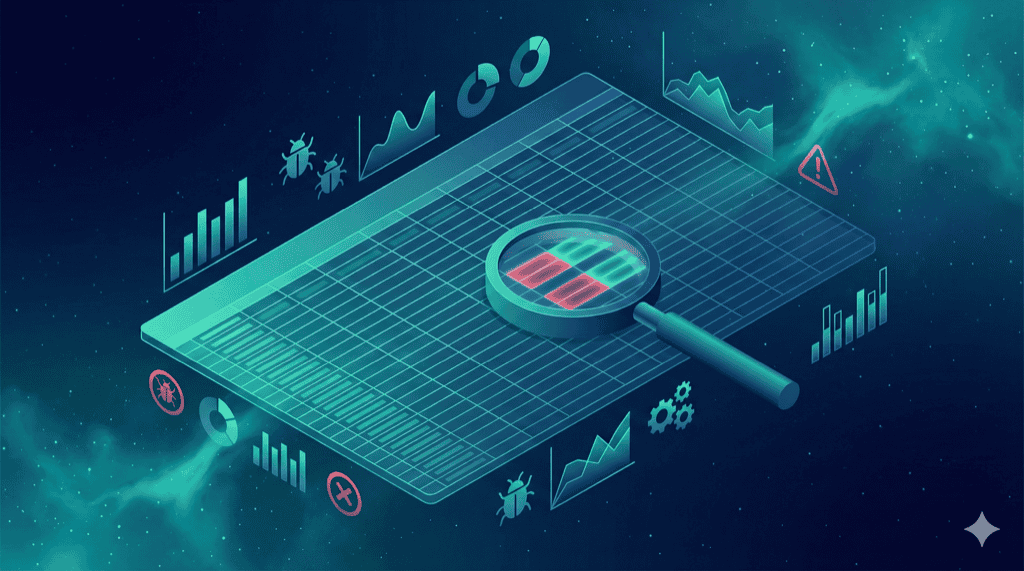

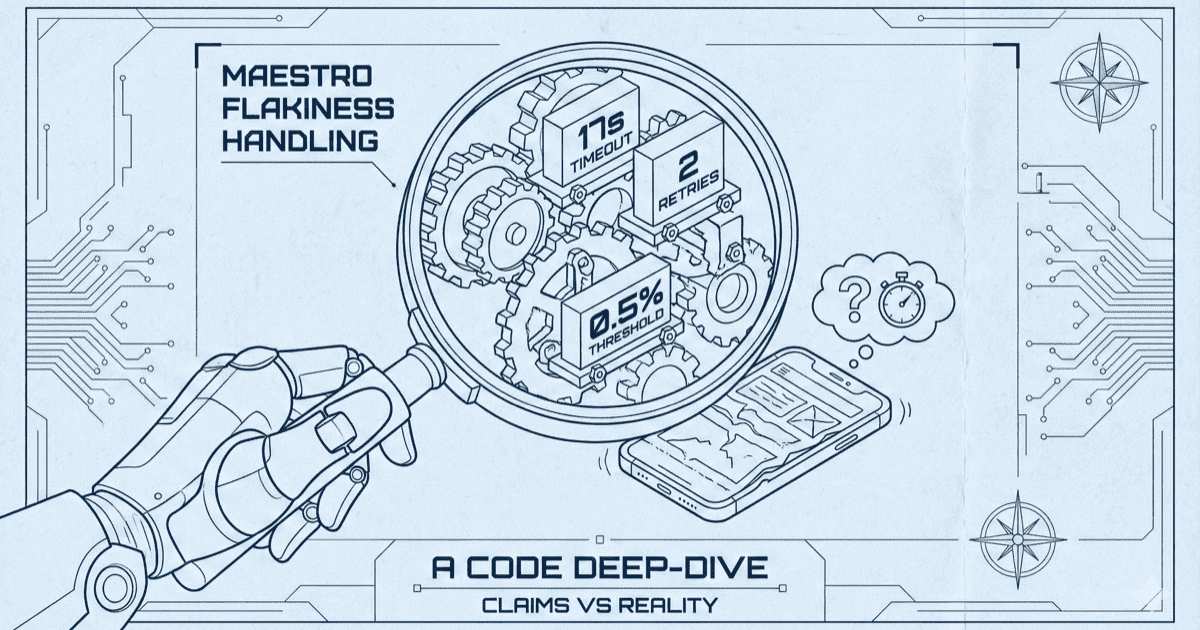

Maestro Flakiness: Deep Dive Into the Source

We analyzed Maestro's source code to see what 'built-in flakiness handling' actually means. Hardcoded timeouts, limited retries, and no configuration.

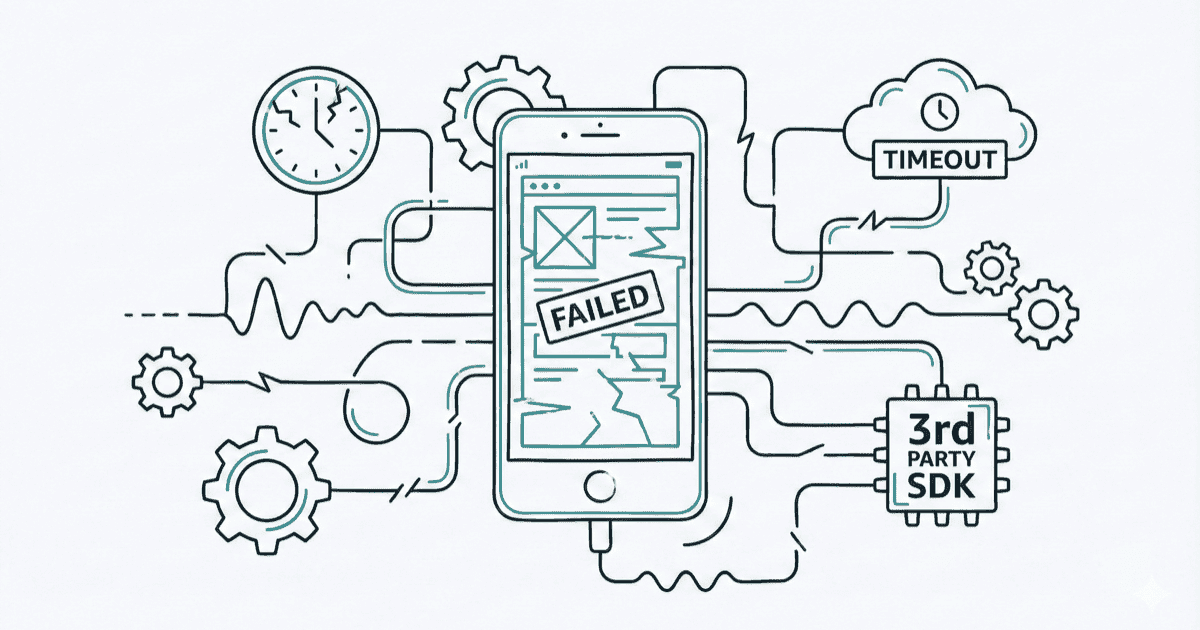

Maestro's Built-In Retry Isn't Enough—Here's

Real scenarios where Maestro's 'automatic' handling breaks down: slow CI, complex animations, third-party SDKs.

Maestro's Open GitHub Issues: 47 Flakiness

Real Maestro users reporting real problems: timeouts ignored, assertions failing on visible elements, taps that don't work.

What Maestro Learned from Appium's Mistakes

10+ years of battle-tested patterns: configurable waits, explicit timeouts, plugin architecture.

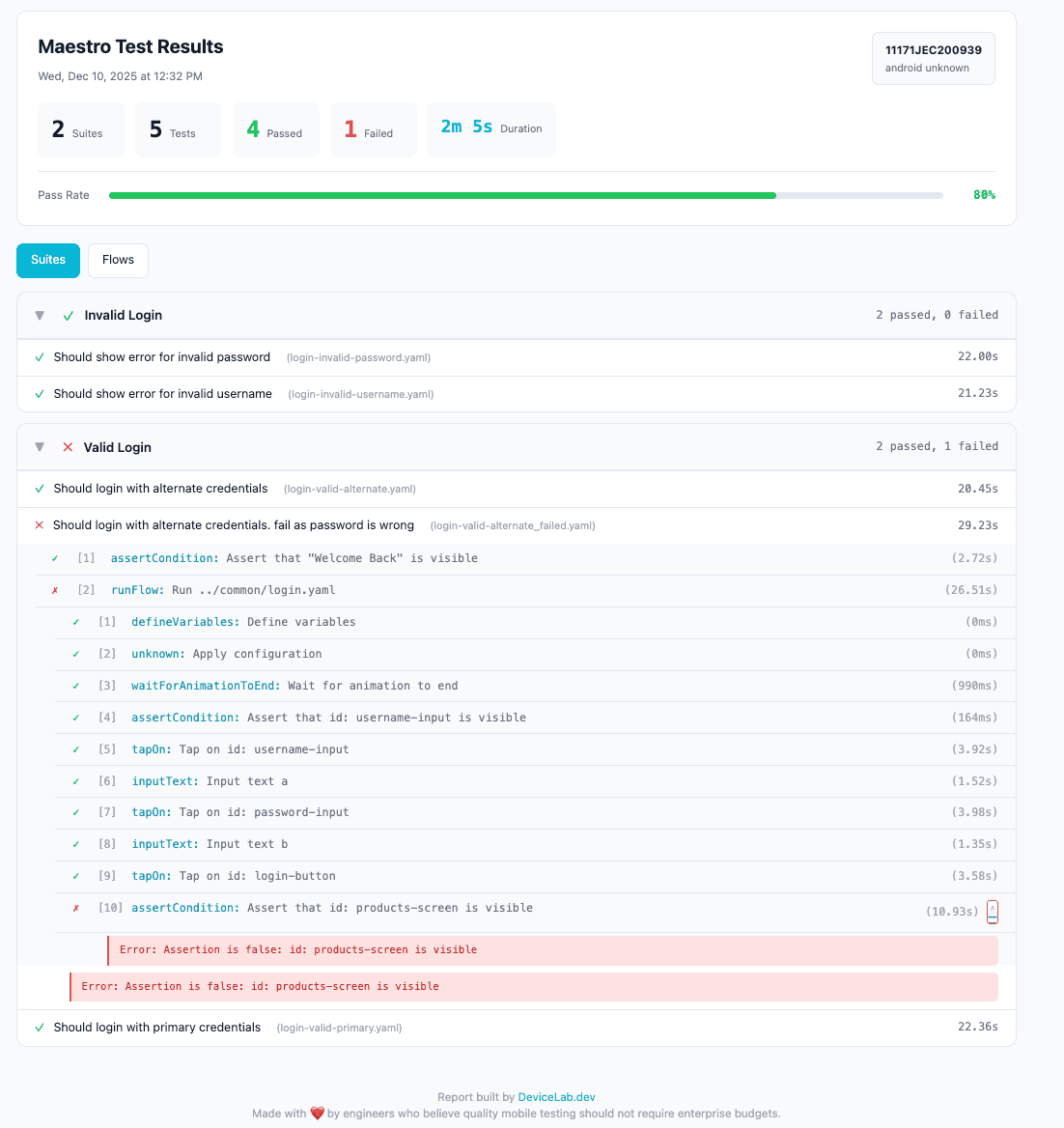

Maestro Reports Are Broken—How to Get JUnit,

Maestro's native reporting is bare-bones. Here's a solution that adds proper reports.

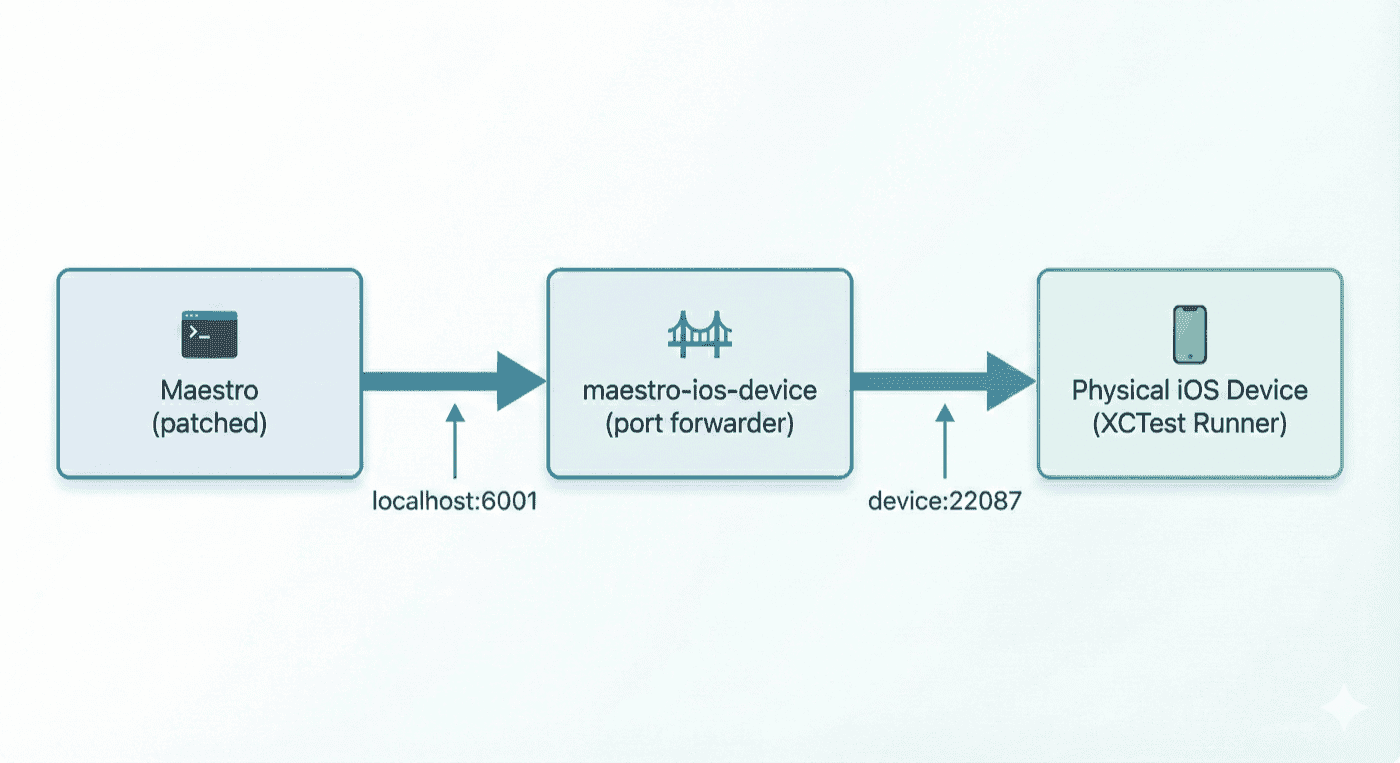

Maestro on Real iOS Devices: The Complete Guide

Maestro doesn't officially support physical iPhones. Here's a working solution.